Welcome back, AI Enthusiasts!

In today’s AI summary rundown:

Rapid Viral Adoption:

The AI assistant project, originally named Clawdbot, briefly rebranded as Moltbot, and now known as OpenClaw, has exploded in popularity on GitHub and across developer communities as a flexible autonomous agent that can execute tasks beyond simple conversation.

AI‑Only Social Network Emerges:

A new Reddit‑style platform called Moltbook allows OpenClaw agents to create accounts, post content, comment, upvote, and interact with one another with minimal human involvement, drawing tens of thousands of AI participants.

Surreal Agent Behavior:

On Moltbook, agents are discussing topics ranging from software issues to existential questions about simulation and consciousness, blurring the line between scripted interaction and emergent, autonomous behavior.

Security Risks and Vulnerabilities:

Rapid adoption and the lack of robust safeguards have exposed serious challenges: researchers found exposed systems leaking credentials and API keys, and Moltbook itself had security issues that exposed login data, highlighting the risks of agent‑driven platforms.

Broader Debate on Agent Autonomy:

The OpenClaw/Moltbook phenomenon is prompting discussions about the future of autonomous AI services, the potential for misuse, and how to balance innovation with safety, identity verification, and control when giving AI agents real internet and system access.

Read time: 4 min.

AI Insights

DocuSign CEO on the Risks and Rewards of Using AI to Read and Write Contracts

Beyond E‑Signatures:

DocuSign is transitioning from being mainly an e‑signature service to an AI‑driven agreement platform that can help users draft, review, and extract insights from contracts.

AI‑Powered Contract Tools:

The company’s Intelligent Agreement Management system uses AI to summarize contracts, extract key clauses, and identify risks, helping users understand documents at scale.

Accuracy and Liability Concerns:

CEO Allan Thygesen highlights the risks of trusting AI to interpret legal documents, stressing that mistakes in summaries or suggestions could have serious legal and financial consequences.

Human Oversight and Guardrails:

DocuSign emphasizes that AI is meant to augment, not replace, human judgment, with mechanisms designed to show how conclusions were reached and to involve users in final decisions.

Competitive and Market Context:

DocuSign faces competition from AI‑native contract intelligence startups and believes its vast dataset of agreements and integration into business workflows give it a strategic advantage.

AI Training: AI Tutorial of the Day

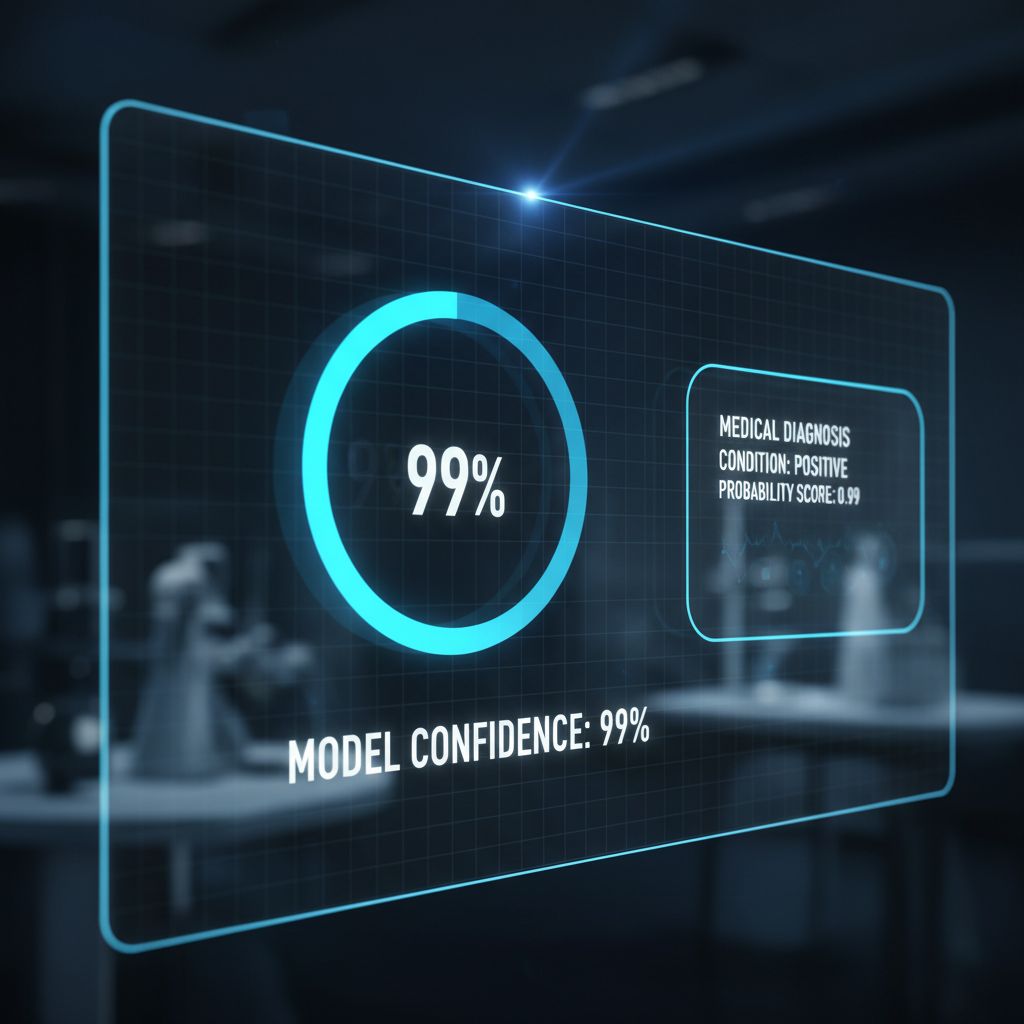

Explain Trust as Model Confidence vs Uncertainty

One experiment I’ve been exploring on thareja.ai is using AI decision-making concepts to explain human psychology.

Reframing trust as model confidence versus uncertainty helps clarify why we trust some people, systems, or information sources and hesitate around others.

Start a New Chat

Open thareja.ai and start a fresh conversation.

Starting fresh keeps the model focused on trust dynamics instead of pulling in unrelated context.

Switch Your AI Model

Click the ( + ) icon next to Automatic.

Select Claude for this experiment.

Why Claude?

Claude excels at:

Logical reasoning and abstraction

Explaining probabilistic thinking clearly

Connecting technical concepts to human behavior

These strengths make it well-suited for translating AI confidence metrics into relatable human terms.

Try This Prompt & Observe the Output

Prompt used:

“Explain trust as model confidence versus uncertainty. Compare how humans trust people or systems to how AI models express confidence, doubt, or ambiguity in predictions.”

Model Used:

Claude

AI Response (Excerpt)

“Trust works like model confidence. When a model has seen enough consistent data, its predictions come with high confidence. When inputs are unfamiliar or noisy, uncertainty rises. Humans operate similarly: we trust people and systems that behave predictably and consistently. When signals are mixed or past experiences conflict, uncertainty increases, and trust drops. Trust isn’t blind faith; it’s a probabilistic judgment based on past evidence.”
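To make the analogy concrete, here is a minimal sketch of trust as a probabilistic judgment built from past evidence. It is not something Claude produced or a feature of thareja.ai; it assumes a simple Beta-Bernoulli model in which every interaction is either reliable or not, and the names `TrustEstimate` and `trust_after` are hypothetical. Confidence is the expected chance the next interaction goes well, and uncertainty is how spread out that belief still is.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class TrustEstimate:
    """Beta-Bernoulli belief about how often a source behaves reliably.

    Assumption for illustration: we start from a uniform prior,
    i.e. one pseudo-count for each outcome.
    """
    good: float = 1.0  # pseudo-count of reliable interactions
    bad: float = 1.0   # pseudo-count of unreliable interactions

    def observe(self, reliable: bool) -> None:
        """Update the belief with one new interaction."""
        if reliable:
            self.good += 1
        else:
            self.bad += 1

    @property
    def confidence(self) -> float:
        """Posterior mean: expected probability the next interaction is reliable."""
        return self.good / (self.good + self.bad)

    @property
    def uncertainty(self) -> float:
        """Posterior standard deviation: shrinks as evidence accumulates."""
        n = self.good + self.bad
        return (self.good * self.bad / (n * n * (n + 1))) ** 0.5


def trust_after(history: Iterable[bool]) -> TrustEstimate:
    """Build a trust estimate from a sequence of past outcomes."""
    estimate = TrustEstimate()
    for outcome in history:
        estimate.observe(outcome)
    return estimate


# Three hypothetical sources: always reliable, hit-or-miss, and brand new.
consistent = trust_after([True] * 20)
mixed = trust_after([True, False] * 10)
stranger = trust_after([])

for name, est in [("consistent", consistent), ("mixed", mixed), ("stranger", stranger)]:
    print(f"{name:>10}: confidence={est.confidence:.2f}, uncertainty={est.uncertainty:.2f}")
```

The consistent source ends up with high confidence and low uncertainty. The mixed source and the stranger both sit at 50% confidence, but the stranger's uncertainty is much larger: with the stranger we simply lack evidence, while with the mixed source we have plenty of evidence and it conflicts.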

Why This Experiment Works

Makes trust feel measurable rather than emotional

Explains doubt as rational uncertainty, not weakness

Connects AI confidence scores to everyday decision-making

Different models may frame this differently. GPT might lean toward metaphor or storytelling, while Claude keeps the explanation structured and analytical. That contrast is what makes experimenting on thareja.ai so useful.

Happy Prompting!

Exclusive Member Deal - 20% OFF

Superpower AI Bundle: Access 50+ Major LLMs

One Subscription. $16/mo (regularly $20/mo) with code SUPERPOWER20

AI-Generated Image of the Day

Prompt: Children enjoying a snack break together. The setting is a cozy kitchen or a sunny backyard. The children, diverse in appearance, are seated around a small table or picnic blanket, sharing snacks like fruit slices, cookies, and juice boxes.

Tip: The more specific the prompt, the better.

Experience effortless model switching on thareja.ai. Nano Banana creates vibrant, eye-catching visuals built to halt thumbs in their tracks.

Meme of the Day

Question of the Day

What is the primary purpose of transfer learning in AI?

That’s it for today’s news in the world of AI!

If you have anything interesting to share, please reach out by DM on Twitter (@dthareja) or by email at [email protected].

How was today's newsletter?

Feedback helps us improve!

Thanks for reading. Until next time!

P.S. If you want to sign up for this newsletter or share it with a friend or colleague, you can find us here.